I was taught in school to set my “input levels” as high as possible (without clipping, of course). However, I later learned that, when it comes to recording levels, digital recording isn’t the same as analog recording.

Contrary to popular belief, setting your levels as high as possible will NOT give you the best results. Back in the days of analog recording, sound engineers did so as a tactic to combat the “noise floor”. The signal-to-noise ratio was much more of an issue than it is with today’s digital hardware. As you read through this article, you’ll realize that there are NO advantages to setting your levels as high as possible. I will be sharing what I believe to be the optimal recording level based on some extensive research. There were actually TWO reasons that convinced me, so I hope they’ll justify what I have to say. If you’ve ever debated the issue yourself, this article should provide some relief. Let’s get started!

- Setting recording levels to optimize the signal-to-noise ratio

- dBVU meters vs dBFS meters

- Optimizing your signal using VU meters

- Setting your recording levels to -18 dBFS: 2 reasons

- Setting recording levels for digital recording

Setting recording levels to optimize the signal-to-noise ratio

Inherent to electronic circuitry is the presence of what we refer to as the noise floor. By measuring our signal’s amplitude against the noise floor, we obtain what’s known as the signal-to-noise ratio (SNR).
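If you like to see the arithmetic, here is a minimal sketch of how a signal-to-noise ratio is usually expressed in decibels. The function name `snr_db` and the example amplitudes are made up for illustration; they don’t come from any particular piece of gear.

```python
import math

def snr_db(signal_rms: float, noise_rms: float) -> float:
    """Signal-to-noise ratio in decibels, from two RMS amplitudes."""
    if signal_rms <= 0 or noise_rms <= 0:
        raise ValueError("RMS amplitudes must be positive")
    # Amplitude ratios use 20 * log10; power ratios would use 10 * log10.
    return 20.0 * math.log10(signal_rms / noise_rms)

# Illustrative numbers only: a signal 1000 times larger than the noise floor.
print(round(snr_db(1.0, 0.001), 1))  # 60.0
```

The further the signal sits above the noise floor, the bigger that number gets, which is why analog engineers pushed their levels.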

Back in the days of analog recording, achieving the best signal-to-noise ratio was challenging.

However, the noise floor has become virtually inaudible since the advent of digital recording. In other words, we no longer need to set our levels “as high as possible” to compensate, BUT…

Levels would still be important if you were using analog amplification.

You’d need to adjust the gain for each point of amplification to minimize noise and distortion (clipping). This is what we refer to as gain staging OR gain structuring.

However, unless you’re using an analog mixer and/or a tape machine, you won’t need to be as meticulous with this process.

In the digital world, the only thing we need to avoid is clipping our analog-to-digital converters (ADCs).

However, once your signal has reached your digital audio workstation (DAW), it may still have accumulated noise from…

- Microphones

- Pre-amplifiers

- Instrument pickups

- Unbalanced cable runs

- Effects processors

- Amplifiers

As you can see, each ingredient we add to the mix has the potential of introducing noise. This is because everything that happens BEFORE your audio interface is technically analog.

For now though, we’re only focusing on setting our interface’s levels appropriately.

dBVU meters vs dBFS meters

Analog equipment can actually benefit from setting levels as high as possible. If you’ve ever used valve/tube amplifiers, you know that pushing the gain can distort it in a pleasant way.

In other words, clipping ever so slightly in the analog world produces what we refer to as saturation.

However, clipping in the digital world will produce anything but favourable results. That’s why setting your levels as high as possible has NO advantage.

If anything, you’ll actually encounter TWO major problems by doing so (more on this later).

The first thing we need to understand is that dBFS metering wasn’t always the standard for measuring amplitude. Back in the day, sound engineers were measuring amplitude using dBVU meters.

You know… the one with the bouncing needle.

Here’s the shocking truth though… 0 dBVU ≠ 0 dBFS

Actually, in most setups 0 dBVU is calibrated to sit at around -18 dBFS (the exact figure varies between standards and converters).
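To make the relationship concrete, here is a small sketch of the conversion between the two scales. It assumes the -18 dBFS calibration used throughout this article; the constant and function names are my own, and you’d swap in a different reference if your gear is aligned differently.

```python
# Assumption: 0 dBVU is aligned to -18 dBFS, as in this article.
CALIBRATION_DBFS = -18.0

def dbfs_to_dbvu(level_dbfs: float, calibration_dbfs: float = CALIBRATION_DBFS) -> float:
    """Convert an average level in dBFS to the matching dBVU reading."""
    return level_dbfs - calibration_dbfs

def dbvu_to_dbfs(level_dbvu: float, calibration_dbfs: float = CALIBRATION_DBFS) -> float:
    """Convert a dBVU reading back to an average level in dBFS."""
    return level_dbvu + calibration_dbfs

print(dbfs_to_dbvu(-18.0))  # 0.0
print(dbfs_to_dbvu(-12.0))  # 6.0
print(dbvu_to_dbfs(0.0))    # -18.0
```

So a needle sitting at 0 on the VU meter is nowhere near the top of a dBFS meter.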

What does this mean?

Well, it appears that sound engineers had been recording at much lower levels than we expected. Does this mean that we should be setting our input levels to average -18 dBFS?

Optimizing your signal using VU meters

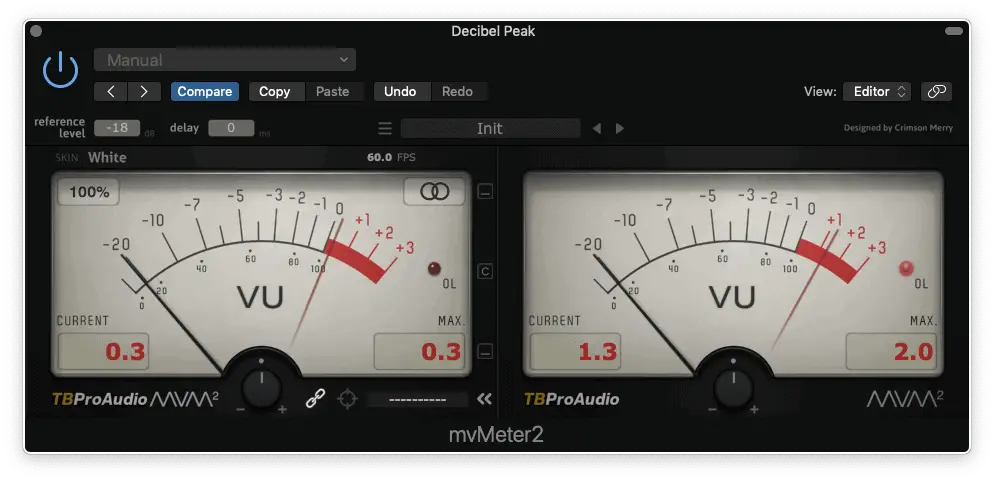

To answer the previous question… YES, we should be setting our input levels to average -18 dBFS. However, this does not mean that your track cannot peak any higher than -18 dBFS.

Keep in mind that dBVU meters show the AVERAGE amplitude (similar to an RMS, or root mean square, reading) rather than the instantaneous peaks.

In other words, you can have your tracks peaking at -6 dBFS, but the average amplitude will remain -18 dBFS. So, how can you start metering your tracks using this method?
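Here is a rough sketch of that peak-versus-average gap. It measures a made-up decaying tone (a stand-in for a plucked note) using plain RMS over the whole clip, which is only an approximation of how a real VU needle moves. The helper names and the test signal are assumptions for illustration.

```python
import math

def peak_dbfs(samples):
    """Highest absolute sample value, in dB relative to full scale (1.0)."""
    return 20.0 * math.log10(max(abs(s) for s in samples))

def rms_dbfs(samples):
    """RMS level in dB, using the simple convention that an RMS of 1.0 is 0 dBFS."""
    mean_square = sum(s * s for s in samples) / len(samples)
    return 10.0 * math.log10(mean_square)

# Hypothetical test signal: one second of a 220 Hz tone that decays like a plucked note.
SAMPLE_RATE = 48_000
note = [
    0.5 * math.exp(-6.0 * n / SAMPLE_RATE)
    * math.sin(2.0 * math.pi * 220.0 * n / SAMPLE_RATE)
    for n in range(SAMPLE_RATE)
]

print(f"peak: {peak_dbfs(note):.1f} dBFS")
print(f"RMS:  {rms_dbfs(note):.1f} dBFS")
```

On this particular signal the peak lands at roughly -6 dBFS while the RMS level sits at around -20 dBFS, so a track can look “hot” on a peak meter and still average close to the target.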

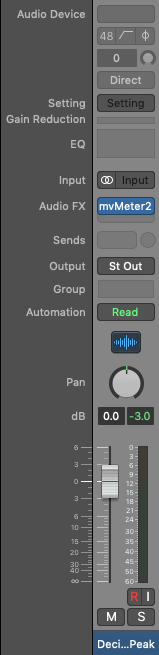

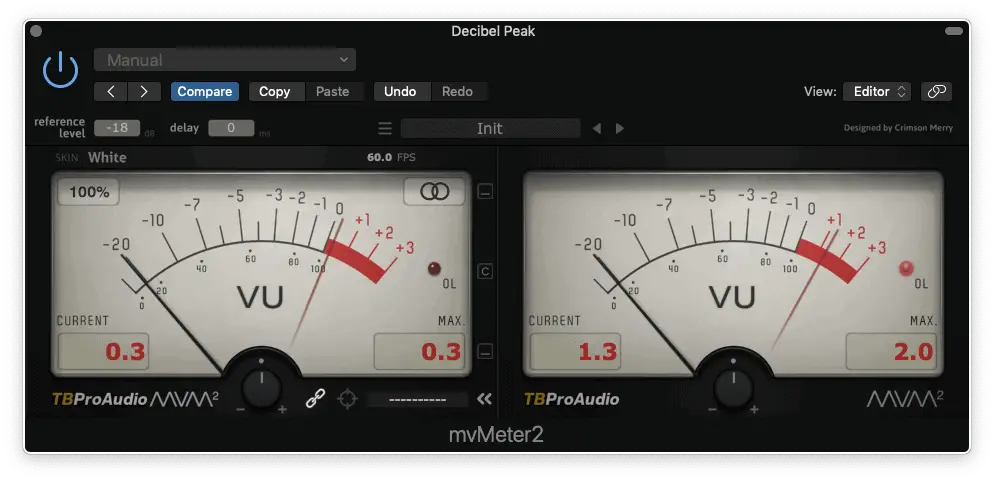

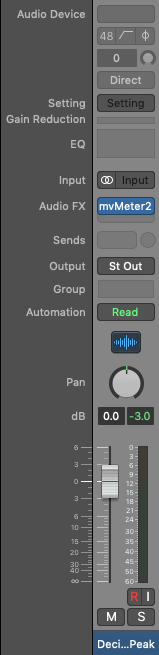

Although there are many dBVU meter plug-ins out there, the mvMeter2 from TBProAudio is my favourite. Did I mention that it’s FREE?

Have you got your dBVU meter plug-in yet? Great, let’s get started!

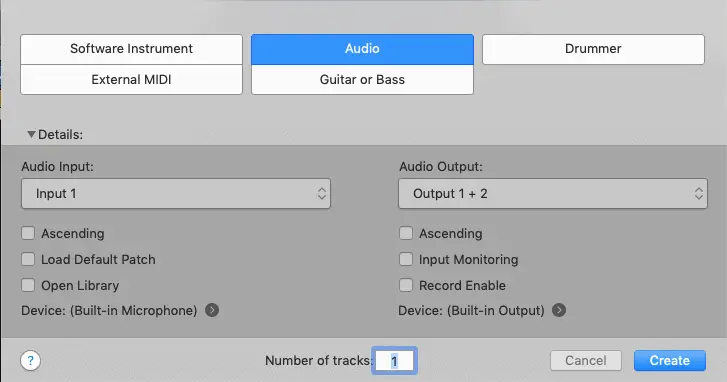

Step 1 | Create an audio track

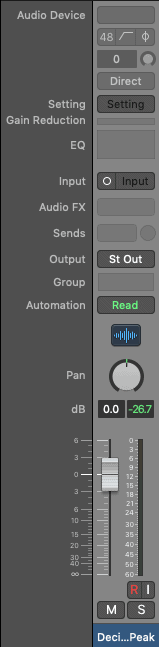

Step 2 | Set your track’s fader to unity gain (aka 0 dB)

Step 4 | Insert a dBVU meter in effects slot 1

Step 5 | Calibrate your plug-in to -18 dBFS (this should be the default setting)

Step 6 | Set your preamp’s levels to average 0 dBVU (it’s okay if it goes over a little)

It’s as simple as that! Repeat this process for ALL your tracks… ALWAYS.

But what if I’m working with a track that has been recorded over this threshold? If you’re mixing other people’s work, this will most likely be the case (unless they’re professionals).

You’ll instinctively want to lower your mixer’s faders, but this will only affect the track’s output, not the level feeding your plug-ins.

There’s an easy fix to this common problem…

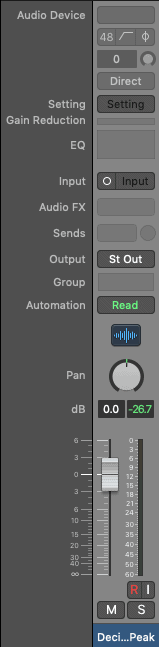

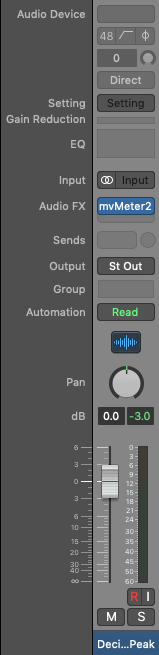

Step 1 | Set your track’s fader to unity gain (aka 0 dB)

Step 2 | Insert a dBVU meter in effects slot 1

Step 3 | Calibrate your plug-in to -18 dBFS (this should be the default setting)

Step 4 | Set your track’s clip gain to average 0 dBVU (it’s okay if it goes over a little)

You can also use a trim plug-in or a dBVU meter that includes this feature. This affects your signal’s input, which is what we need.
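If you’d rather work out the trim amount than chase the needle, the math is a simple subtraction. This is only a sketch: the function names are hypothetical, and the measured average of -9.5 dBFS is an example value I picked, not a reading from a real session.

```python
def trim_gain_db(measured_avg_dbfs: float, target_avg_dbfs: float = -18.0) -> float:
    """Gain change (in dB) that moves the measured average onto the target."""
    return target_avg_dbfs - measured_avg_dbfs

def db_to_linear(gain_db: float) -> float:
    """Convert a gain in dB to the linear factor applied to each sample."""
    return 10.0 ** (gain_db / 20.0)

# Example: a track that was recorded hot and averages -9.5 dBFS.
gain = trim_gain_db(-9.5)
print(gain)  # -8.5
print(round(db_to_linear(gain), 3))
```

In this example you’d pull the clip gain down by 8.5 dB, and the meter should then hover around 0 dBVU.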

But now we need to answer the BIG question… Why is all of this so important?!

Setting your recording levels to -18 dBFS: 2 reasons

Now, I’m sure you’re dying to know why -18 dBFS is so important. I mean, why can’t we record at -6 dBFS like we’ve been taught in school? You can let me know in the comments if you agree or disagree!

Reason #1

Plug-ins that emulate analog equipment have an optimal “nominal operating level”. In English, this simply means that if you exceed a certain threshold, they won’t sound as good.

If you don’t believe me, read page 17 of the instruction manual for Slate Digital’s Virtual Tape Machines.

Remember, analog equipment works around 0 dBVU, which usually corresponds to -18 dBFS. In other words, tracks that run well above this level can push these plug-ins into distortion (clipping).

You may not notice a difference if you’re using standard digital plugins, but there’s another problem…

Reason #2

By setting your levels as high as you can, you’ll be clipping your master bus once you’ve got multiple tracks. As I mentioned before, you’ll instinctively want to bring down your mixer’s faders, BUT…

The further away you move from “unity gain”, the less resolution you’ll get from your fader.

You may have noticed that your fader’s scale isn’t linear, it’s logarithmic. This one starts in increments of 3, then moves up to 5 and finally to 10.

This’ll become a problem during the automation stage… Instead of increments of, say, 1 dB, you’ll be working in increments of 10 dB. In other words, you won’t have as much accuracy as you would have by staying in the top portion of your fader.
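To put rough numbers on the master bus problem from Reason #2, here is a back-of-the-envelope sketch. It assumes every track has the same average level and that the tracks are unrelated to each other, so their power simply adds up. Real mixes are messier, so treat this as an estimate, not a prediction. The function name is made up.

```python
import math

def summed_average_dbfs(track_avg_dbfs: float, track_count: int) -> float:
    """Average level of N equal-level, uncorrelated tracks summed on one bus."""
    if track_count < 1:
        raise ValueError("track_count must be at least 1")
    # Uncorrelated signals add in power, hence 10 * log10 rather than 20 * log10.
    return track_avg_dbfs + 10.0 * math.log10(track_count)

for avg in (-18.0, -6.0):
    bus = summed_average_dbfs(avg, 16)
    status = "over full scale" if bus > 0.0 else "below full scale"
    print(f"16 tracks averaging {avg:.0f} dBFS -> about {bus:.1f} dBFS on the bus ({status})")
```

Under those assumptions, sixteen tracks add about 12 dB. Tracks recorded around -18 dBFS still leave the bus below full scale on average, while tracks recorded around -6 dBFS push it over before you’ve touched a fader.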

Are you convinced yet?

Setting recording levels for digital recording

I remember hearing about this shocking truth a couple of years ago. I wanted to believe it, but I needed to have some concrete evidence.

These were the TWO reasons that convinced me to make the transition to -18 dBFS.

However, you could technically record at any amplitude as long as you don’t clip your converters. You could always reduce the volume in your recording software, right?

I used to work this way, but I can’t imagine myself ever looking back. I mean, we now know that there are NO advantages to recording “hotter” in the digital world.

Only disadvantages…

So, hopefully this has given you a new perspective on sound recording! What do you think about recording at -18 dBFS? Does it resonate with you? Let us know in the comments and feel free to share your personal approach to setting levels.